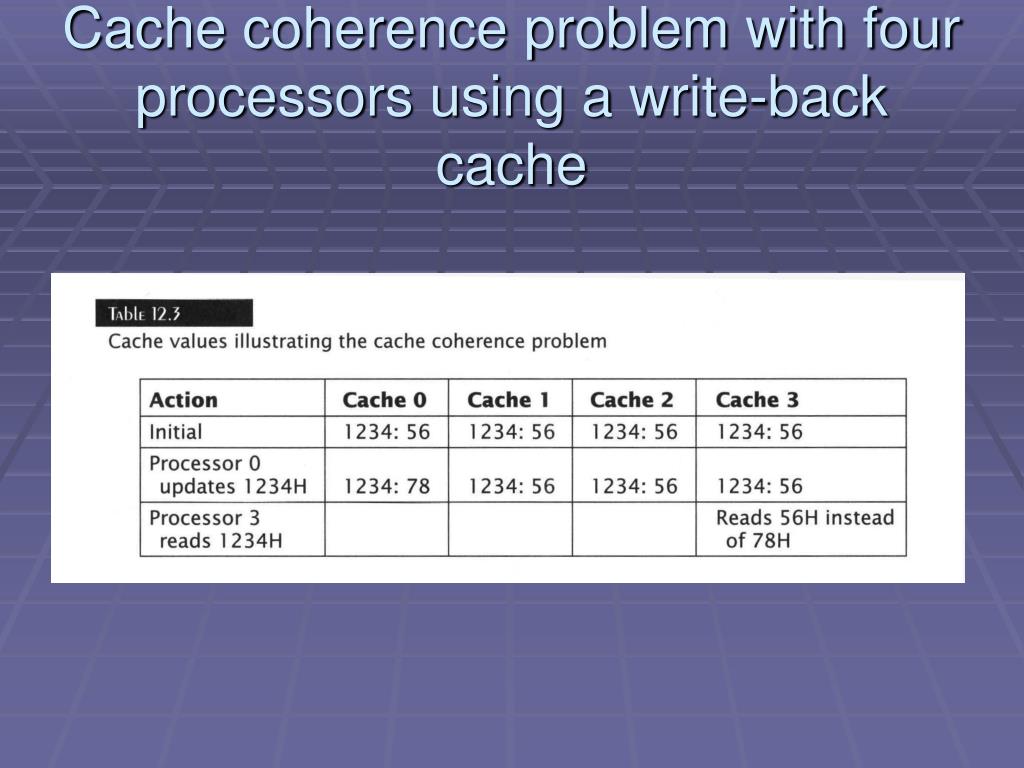

If a multiprocessor has an independent cache in each processor, two or more caches may hold different values of the same location (variable) at the same time. This is generally referred to as the cache coherence or multi-cache consistency problem.
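The situation can be reproduced with a toy model. The sketch below is only an illustration, not a description of any real hardware: the `Cache` class, its write-back behaviour, and the variable name `"X"` are all assumptions introduced here. Two processors each read the same location into a private cache, one of them writes a new value, and the other keeps seeing its stale copy.

```python
class Cache:
    """Hypothetical private cache with NO coherence protocol (toy model)."""

    def __init__(self, memory):
        self.memory = memory   # shared main memory, modelled as a dict
        self.lines = {}        # address -> locally cached value

    def read(self, addr):
        # On a miss, fetch the value from shared memory; on a hit, use the local copy.
        if addr not in self.lines:
            self.lines[addr] = self.memory[addr]
        return self.lines[addr]

    def write(self, addr, value):
        # Assumed write-back policy: only the local copy is updated.
        self.lines[addr] = value

    def flush(self, addr):
        # Copy the local value back to shared memory.
        self.memory[addr] = self.lines[addr]


memory = {"X": 0}
cache_a = Cache(memory)   # private cache of processor A
cache_b = Cache(memory)   # private cache of processor B

a_before = cache_a.read("X")   # both processors load X into their caches
b_before = cache_b.read("X")

cache_a.write("X", 1)          # processor A updates X locally

a_after = cache_a.read("X")    # A sees its new value
b_after = cache_b.read("X")    # B still sees the old value from its own cache

print("A sees", a_after, "| B sees", b_after, "| memory holds", memory["X"])
```

Even after `cache_a.flush("X")`, processor B would continue to read its stale copy in this model, because nothing invalidates or updates the line in `cache_b`. That missing step is what a coherence mechanism has to supply.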

In a shared-bus multiprocessor system using shared memory, giving each processor a cache, with or without local memory, is essential to reduce the average access time in each processor and to reduce contention for the shared system bus. Each cache or local memory sits on a dedicated local bus, and all these local buses are, in turn, connected to the main shared system bus. Each processor thus has its own private cache, which allows both data and instructions to be accessed by that processor without using the shared system bus. Each processor then views the memory through its own individual cache. Local memory supports caching of both private and shared data.
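The effect of a private cache on average access time can be sketched with a simple weighted average. This is a rough model under stated assumptions, not a figure from the text: a hit is served from the cache, a miss goes over the shared bus to main memory, and the hit rate and latencies used below are purely illustrative numbers.

```python
def average_access_time(hit_rate, cache_time_ns, memory_time_ns):
    """Weighted average of cache and memory latency (simplified model).

    Assumption: a miss costs memory_time_ns in total; bus queueing delay
    is ignored.
    """
    return hit_rate * cache_time_ns + (1.0 - hit_rate) * memory_time_ns


def bus_access_fraction(hit_rate):
    """Fraction of references that must use the shared bus (the misses)."""
    return 1.0 - hit_rate


# Illustrative values only (assumed, not taken from the source).
no_cache = average_access_time(0.0, 2.0, 100.0)
with_cache = average_access_time(0.95, 2.0, 100.0)

print(f"no cache:   {no_cache:.1f} ns per access")
print(f"with cache: {with_cache:.1f} ns per access")
print(f"bus used by {bus_access_fraction(0.95):.0%} of references")
```

Under these assumed numbers, most references never reach the shared bus, which is the source of both the lower access time and the lower contention.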

The emerging dominance of microprocessors, driven by radical developments in VLSI technology at the beginning of the 1980s, motivated many designers to create small-scale multiprocessors in which several processors sat on one motherboard and shared a single physical memory built around a single shared bus. In the mid-1990s, with large caches, the bus and the single memory could satisfy the memory demands of a multiprocessor built with a small number of processors. In this architecture, each processor, as shown in Figure 4.33, has uniform access to the single shared memory; hence, these machines are sometimes said to have a uniform memory access (UMA) architecture. This type of UMA architecture, consisting of a centralized shared memory connected to a small number of processors (CPU and IOP) and supported by large caches that significantly reduce the bus bandwidth requirements, is currently by far the most popular organisation, and is extremely cost-effective.

Figure 4.33: Basic structure of a centralized shared-memory multiprocessor.

Another approach consists of multiple processors with physically distributed memory. To support larger processor counts, memory must be distributed among the processors, with each processor having a part of the memory of its own, to support the bandwidth demands. An illustration of such an architecture is shown in Figure 4.34.

Figure 4.34: Basic architecture of a distributed-memory machine containing individual nodes (node = processor + some memory + typically some I/O and an interface to the interconnection network).

Whatever the memory-module design, typically all processing elements (CPU and I/O processors), main-memory modules (shared or distributed), and I/O devices are attached directly to the single shared bus, which eventually becomes a potential communication bottleneck, leading to contention and delay whenever two or more units try to access the main memory simultaneously. This severely limits the number of processors that can be included in such a system without an unacceptable degradation in performance. To improve performance considerably, each CPU is, however, provided with a cache or a local memory connected by a dedicated local bus, which can even include local I/O devices.
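The way a single shared bus limits the processor count, and the way caches relax that limit, can be made concrete with a back-of-the-envelope model. Everything here is an assumption made for illustration: the function names, the reference rate, the miss rates, and the bus occupancy per transaction are hypothetical, and real buses also suffer queueing delays well before they reach full utilization.

```python
import math


def bus_utilization(processors, refs_per_us, miss_rate, bus_time_us):
    """Fraction of time the shared bus is busy (simplified model).

    Each processor issues refs_per_us memory references per microsecond;
    only misses reach the bus, and each occupies it for bus_time_us.
    """
    return processors * refs_per_us * miss_rate * bus_time_us


def max_processors(refs_per_us, miss_rate, bus_time_us):
    """Largest processor count that keeps bus utilization at or below 1."""
    demand_per_processor = refs_per_us * miss_rate * bus_time_us
    return math.floor(1.0 / demand_per_processor)


# Illustrative values only (assumed, not taken from the source).
REFS_PER_US = 10      # memory references per processor per microsecond
BUS_TIME_US = 0.05    # bus occupancy per transaction, in microseconds

without_caches = max_processors(REFS_PER_US, 1.0, BUS_TIME_US)   # every reference uses the bus
with_caches = max_processors(REFS_PER_US, 0.06, BUS_TIME_US)     # only 6% of references miss

print("processors supported without caches:", without_caches)
print("processors supported with caches:   ", with_caches)
```

The absolute numbers mean little, but the ratio shows why large caches make a small bus-based multiprocessor workable, and why the bus still caps the processor count once the combined miss traffic approaches its capacity.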
